It has been a couple of years since my previous blog post about leap seconds, though I have been tweeting on the topic fairly frequently: see my page on date, time, and leap seconds for an index of threads. But Twitter now seems a lot less likely to stick around, so I’ll aim to collect more of my thinking-out-loud here on my blog.

falsehoods programmers believe about leap seconds

When programmers discuss the problems caused by leap seconds, they usually agree that the solution is to “just” keep time in TAI, and apply leap second adjustments at the outer layers of the system, in a similar manner to time zones.

Sadly, this simplification does not work, because there’s a mess of historical accidents and social and technical requirements that do not always align as we might wish.

reality bites

Fundamentally, computer systems need to work with civil time (i.e. UTC) because it is baked into a huge number of standards: file formats, protocols (including NTP), APIs (including POSIX), etc., etc. It’s impossible to sweep all that away by saying “just use TAI”: even the kernel has to support things like filesystem timestamps in UTC, and it can’t deprecate POSIX time_t in APIs without a huge ABI break.

POSIX time_t is often criticised as being “wrong” for ignoring leap seconds. (NTP ignores leap seconds in the same manner, but is hardly ever criticised for it.) However, calendaring applications (and others that deal with times in the future) have to ignore leap seconds so that they can schedule events more than 6 months ahead, beyond the horizon of announced leap seconds, where the difference TAI-UTC is unknown. So ignoring leap seconds is not always wrong.
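To make this concrete, here is a small Python sketch of POSIX-style arithmetic using the standard library’s `calendar.timegm`, which, like time_t, counts every day as exactly 86400 seconds. The helper name `posix_seconds` is just for illustration. The last day of 2016 ended with a positive leap second, so it really lasted 86401 SI seconds:

```python
import calendar


def posix_seconds(year, month, day, hour=0, minute=0, second=0):
    """Seconds since 1970-01-01T00:00:00Z, counting every day as 86400 s."""
    # calendar.timegm ignores leap seconds, just as POSIX time_t does.
    return calendar.timegm((year, month, day, hour, minute, second))


day_start = posix_seconds(2016, 12, 31)
day_end = posix_seconds(2017, 1, 1)

# POSIX says the day lasted 86400 s, although it contained a leap second.
print(day_end - day_start)  # 86400

# The leap second itself, 23:59:60, lands on the same value as the
# following midnight, so it has no time_t value of its own.
print(posix_seconds(2016, 12, 31, 23, 59, 60) == day_end)  # True

# A far-future event can be stored without knowing future TAI-UTC.
meeting = posix_seconds(2040, 3, 14, 15, 0, 0)
print(meeting)
```

The last line is the calendaring case: `meeting` will still mean 15:00 UTC on that date, whatever leap seconds are (or aren’t) inserted in between.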

rubbery indiscretions

UTC has always been an awkward compromise between atomic time and mean solar time.

In the 1960s UTC kept in sync with the earth using a combination of small steps and rate adjustments (aka “rubber seconds”). This was problematic for radio broadcast time signals, because they keep a fixed relationship between the time signals and their carrier’s phase and frequency. So rate changes required retuning the transmitters.

As I understand it, UTC in the 1960s was mostly co-ordinated between the USA and UK; other countries had different ways of bridging between TAI and UT, such as “stepped atomic time”, which had a fixed rate with relatively frequent 100ms or 200ms jumps.

atomic quantum

Since the 1930s, when we first developed clocks that run more regularly than the earth, there had been a gradual technocratic exploration and development of solutions for coping with the difference between UT (aka mean solar time, aka civil time) and our best, most regular timescales (ET, TT, TAI, etc.).

This was suddenly put to a stop when Germany made a law implementing the new atomic definition of the SI second decided at the 1967 CGPM. The effect of this law was to forbid rubber seconds and stepped atomic time: adjustments had to be a whole number of SI atomic seconds. At the same time, celestial navigation was still important, so civil time needed to stay close to UT.

act in haste

Leap seconds were the dirty compromise solution. I say “dirty” because they were cooked up in back-room deals without the kind of open discussion and consultation you would expect for something so important. And it seems no-one was really happy with the compromise, but no-one could come up with a better solution that could be implemented in an acceptable timeframe.

So that’s how things have remained for the last 50 years. UTC isn’t a carefully engineered solution that considers how best to satisfy the accuracy requirements of different users of time and frequency. It’s an ugly hack.

regret at leisure

For the last 20ish years, the people responsible for implementing TAI and UTC have been trying to get rid of leap seconds. It isn’t just because, or even mainly because, software is bad and programmers are too stupid to get UTC right. The impetus comes from people who run systems that have to get UTC right, and they think it is much more painful than necessary.

a zoo of committees

The governance around UTC is complicated. Formally, the ITU-R is responsible for the specification TF.460, and amendments need to be approved by the World Radiocommunication Conference, which is an international treaty conference that happens every 3 or 4 years.

The practical implementation of UTC is the responsibility of the BIPM and the national time laboratories that contribute their measurements. The BIPM and its managing committee, the CIPM, implement the decisions of the CGPM, another quadrennial international treaty conference. Recommendations specifically about time and frequency are prepared by the CCTF, which liaises with the ITU-R, IAU, and other committees.

The scheduling of leap seconds is the responsibility of the IERS, which has a governing board that includes representatives from the International Astronomical Union (IAU), the International Union of Geodesy and Geophysics (IUGG), and the Global Geodetic Observing System (GGOS).

a timeline

WRC 2012 instructed the ITU-R to examine the future of UTC and leap seconds (resolution 653).

WRC 2015 decided not to make a change, but indicated that work should continue in co-operation with the CIPM, and report back at WRC 2023 (resolution 655).

CGPM 2018 approved the new SI, the completion of a huge project; they also found time to formally not decide about UTC, but that work should continue (resolution 2).

CCTF 2022 prepared a “Draft Resolution D” to be presented at the CGPM, with some informative explanatory notes.

CGPM 2022 approved draft D as resolution 4, which instructs the CIPM to consult with the ITU on relaxing the 0.9s limit on UT1-UTC, so that there will be no leap seconds for at least a century after 2035, subject to approval at CGPM 2026.

WRC 2023 should receive a report on the future of leap seconds (per WRC 2015 resolution 655) but as far as I can tell it isn’t on the agenda. I guess this means that the WRC will not make a decision about UTC and work will continue?

CGPM 2026 will make the final decision on whether the CCTF 2022 plan will go ahead.

practical consequences

At the moment, if you need a rough idea of earth angle (i.e. mean solar time, i.e. UT1), you can use UTC, which is accurate to within +/- 0.9s, or about 0.42 km at the equator. TF.460 also specifies that time signals should broadcast DUT1 accurate to 0.1s, i.e. 46m at the equator. (DUT1 is an approximation of the difference UT1-UTC, rounded to a multiple of 0.1s.)
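The conversion from seconds of earth rotation to distance is simple arithmetic. A sketch in Python, assuming the WGS 84 equatorial radius and one rotation per 86400 s of mean solar time (using the sidereal rotation period instead changes the answers by less than 0.3%):

```python
import math

EQUATORIAL_RADIUS_KM = 6378.137   # WGS 84 equatorial radius
SECONDS_PER_ROTATION = 86400.0    # one mean solar day (a simplification)


def equator_km(seconds):
    """Distance a point on the equator moves in `seconds` of earth rotation."""
    circumference_km = 2 * math.pi * EQUATORIAL_RADIUS_KM  # about 40075 km
    return circumference_km * seconds / SECONDS_PER_ROTATION


print(f"UTC bound, 0.9 s: {equator_km(0.9):.2f} km")        # 0.42 km
print(f"DUT1 step, 0.1 s: {equator_km(0.1) * 1000:.0f} m")  # 46 m
```

So the +/- 0.9 s tolerance of UTC amounts to roughly 0.42 km, and each 0.1 s step of DUT1 to about 46 m.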

If leap seconds are abolished, then people who need earth angle to that level of precision (a few hundred metres) will need to start adding DUT1 to the time on their clocks, or get their earth angle measurements elsewhere.
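Adding DUT1 is a one-line correction, UT1 = UTC + DUT1, after which earth angle can be computed from UT1. The sketch below uses the IAU 2000 Earth Rotation Angle formula, θ = 2π(0.7790572732640 + 1.00273781191135448 Tu), where Tu is the number of UT1 days since JD 2451545.0 (2000-01-01T12:00 UT1). Times are POSIX-style seconds; the function names and the DUT1 value in the example are illustrative assumptions, not any particular library’s API:

```python
# Julian Dates of the POSIX epoch and of 2000-01-01T12:00:00 (JD 2451545.0).
UNIX_EPOCH_JD = 2440587.5
J2000_JD = 2451545.0


def ut1_from_utc(utc_posix, dut1):
    """UT1 = UTC + DUT1, with both times as POSIX-style seconds."""
    return utc_posix + dut1


def earth_rotation_angle_deg(ut1_posix):
    """IAU 2000 Earth Rotation Angle, in degrees, for a UT1 instant."""
    tu = ut1_posix / 86400.0 + (UNIX_EPOCH_JD - J2000_JD)  # UT1 days since JD 2451545.0
    # 1.00273781191135448 * tu == tu + 0.00273781191135448 * tu; taking the
    # fraction of tu separately keeps more precision once turns are reduced mod 1.
    turns = (tu % 1.0) + 0.7790572732640 + 0.00273781191135448 * tu
    return (turns % 1.0) * 360.0


utc = 946728000   # 2000-01-01T12:00:00 UTC as a POSIX timestamp
dut1 = 0.3        # hypothetical DUT1 value, seconds
print(earth_rotation_angle_deg(utc))                      # angle if DUT1 is ignored
print(earth_rotation_angle_deg(ut1_from_utc(utc, dut1)))  # corrected angle
```

In this example, ignoring a DUT1 of 0.3 s shifts the computed angle by about 0.0013°, an error of roughly 140 m at the equator, which is the scale of precision at stake here.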

Abolishing leap seconds will require changes to the specification of radio signals (TF.460 and the various national time signals) to allow for larger values of DUT1. The new-ish GPS L5 signals allow DUT1 as large as +/- 127 seconds (see IS-GPS-705 section 20.3.3.5.1).

what about TAI?

Abolishing leap seconds will be helpful for users that have very tight accuracy requirements. UTC is the only timescale that is provided by national laboratories in a metrologically traceable manner, i.e. in a way that provides real-time access and allows users to demonstrate exactly how accurate their timekeeping is.

TAI is not directly traceable in the same way: it is only a “paper” clock, published monthly in arrears in Circular T as a table of corrections to the various national timescales. (See the explanatory supplement for more details.)

The effect of this is that users who require high-accuracy uniformity and traceability have to implement leap seconds to recover a uniform timescale from UTC - not the other way round as the programmers’ falsehood would have it.
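A minimal Python sketch of that recovery step, assuming POSIX-style UTC timestamps and a leap second table. Only the three most recent table entries are included here (TAI-UTC became 35 s on 2012-07-01, 36 s on 2015-07-01 and 37 s on 2017-01-01); a real system would load the complete, current table, for instance the `leap-seconds.list` file published by the IERS. The function names are hypothetical:

```python
import bisect
import calendar

# Excerpt of the leap second table: (UTC instant it takes effect, TAI-UTC in s).
LEAP_TABLE = [
    (calendar.timegm((2012, 7, 1, 0, 0, 0)), 35),
    (calendar.timegm((2015, 7, 1, 0, 0, 0)), 36),
    (calendar.timegm((2017, 1, 1, 0, 0, 0)), 37),
]


def tai_minus_utc(utc_posix):
    """Look up TAI-UTC for a POSIX-style UTC timestamp."""
    starts = [start for start, _ in LEAP_TABLE]
    i = bisect.bisect_right(starts, utc_posix) - 1
    if i < 0:
        raise ValueError("before the start of this table excerpt")
    return LEAP_TABLE[i][1]


def tai_from_utc(utc_posix):
    """A uniform (TAI-based) count: the POSIX value plus TAI-UTC."""
    return utc_posix + tai_minus_utc(utc_posix)


# Across the end of 2016, POSIX UTC says 1 s passed; TAI says 2 s.
before = calendar.timegm((2016, 12, 31, 23, 59, 59))
after = calendar.timegm((2017, 1, 1, 0, 0, 0))
print(after - before)                              # 1
print(tai_from_utc(after) - tai_from_utc(before))  # 2
```

Note that POSIX timestamps cannot name the leap second 23:59:60 itself, so a production implementation needs a representation of UTC that can.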

another timeline

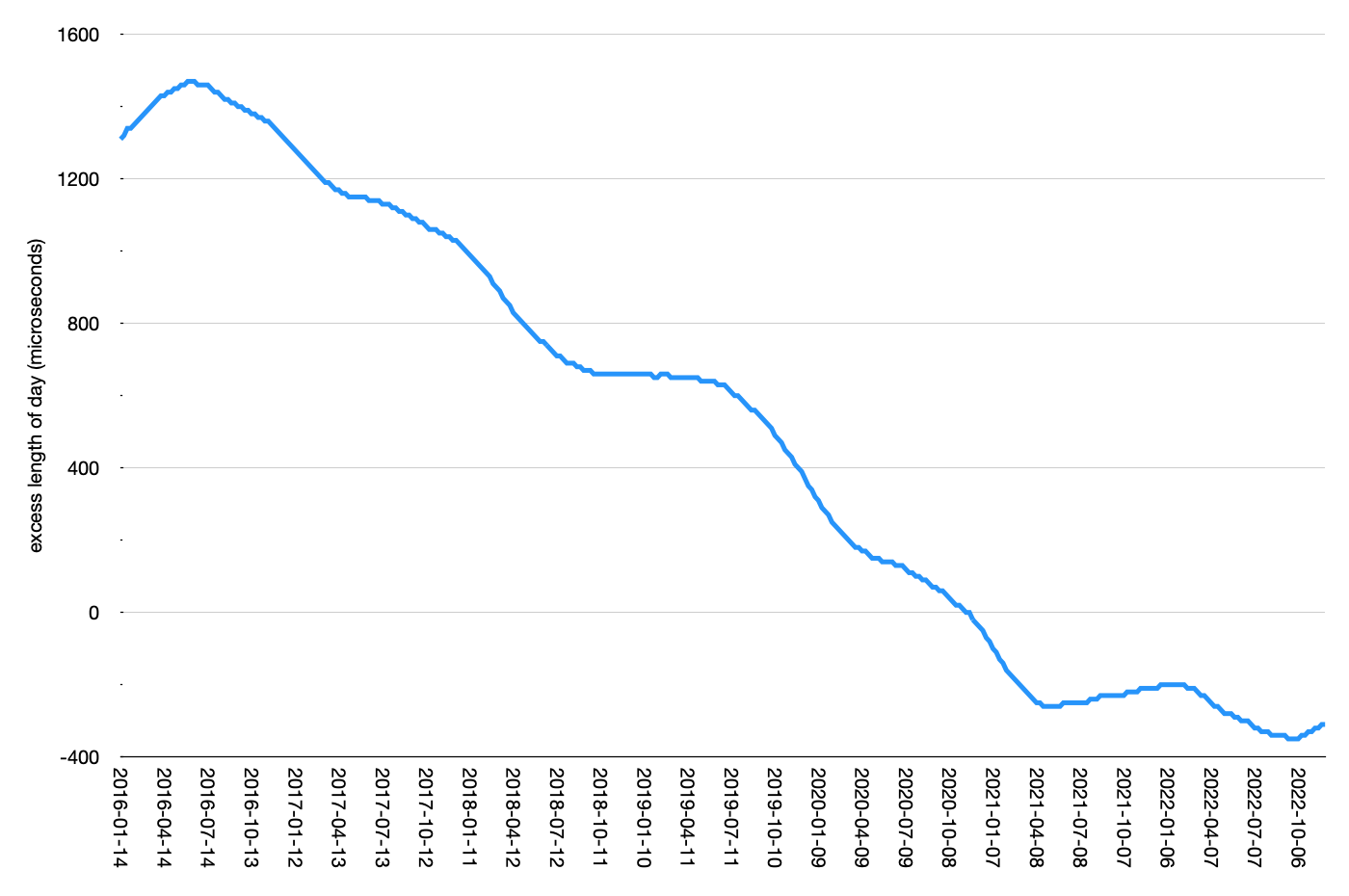

Concurrent with all this thrilling committee activity, the leap second hiatus continues. Below is a chart of the simple linear projection of the length of day from IERS Bulletin A.

The blue line has been below zero since the end of 2020, indicating that the earth has been spinning faster, so that a day lasts less than 24 × 60 × 60 = 86400 seconds. The line has been heading down (the earth speeding up) determinedly since mid-2016, and though it has levelled off a bit in the last couple of years, the earth is not clearly slowing down again.

My trivial guesstimate from this chart is that there will be a negative leap second in about five years, i.e. after CGPM 2026, but before the planned abolition of leap seconds in 2035.
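The arithmetic behind this kind of back-of-the-envelope guess can be sketched in Python. Assuming the length of day stays fixed at some excess over 86400 s, UT1-UTC drifts by minus that excess every day, and a leap second is due when it approaches the +/- 0.9 s bound. The function and its inputs are hypothetical, and real predictions use much richer models of how the length of day changes over time:

```python
import math


def days_until_bound(dut1_now, lod_excess_ms, bound=0.9):
    """Days until UT1-UTC reaches +/-bound, if the length of day stays fixed.

    lod_excess_ms is LOD - 86400 s, in milliseconds. A day longer than
    86400 SI seconds makes UT1 fall behind UTC, and vice versa.
    """
    drift_per_day = -lod_excess_ms / 1000.0  # change in UT1-UTC per day, seconds
    if drift_per_day == 0:
        return math.inf
    # Shorter days push UT1-UTC up towards +bound (negative leap second);
    # longer days push it down towards -bound (positive leap second).
    target = bound if drift_per_day > 0 else -bound
    return (target - dut1_now) / drift_per_day


# Illustrative inputs only, not real Bulletin A values.
days = days_until_bound(dut1_now=-0.1, lod_excess_ms=-0.4)
print(f"{days:.0f} days, about {days / 365.25:.1f} years")
```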

But don’t listen to me! Demetrios Matsakis has made some projections based on a better-informed and more expertly constructed model, which suggests we might not have a negative leap second.

leap minute? leap hour?

In the long term, it’s likely that the bounds on DUT1 will be relaxed again in 100 years’ time, because a leap minute will be far too difficult. In the even longer term, we expect the earth to return to its trend of slowing down due to tidal forces from the moon.

Without leap seconds, DUT1 will eventually grow to an hour in 1000 years or so, by which time it will be obvious that noon is not as close to 12:00 as it used to be. But if we continue to use leap seconds as currently specified, in 1000 years or so we will need several leap seconds per year, and it will be difficult to keep DUT1 within its +/- 0.9 second bounds.

Maybe there will be a breakthrough in modelling and predicting the earth’s speed of rotation, in which case there could be a more fundamental reassessment of how every-day civil time relates to precise atomic time.

opinion

Whichever way, with or without leap seconds, our current timescales are clearly not the be all and end all forever amen, so I think it makes sense that they should be designed for practical utility. UTC is our timescale for both high precision metrology and every-day purposes; there are other better ways to get earth angle, so UTC doesn’t need to (try to, badly) do that job as well.

Tony Finch – blog
